By Dheeraj S | October 12th, 2020

Creating top-quality content that meets your initial brief is challenging, even when working with the most experienced writers. Even with a formal content outline, there is simply too much manual checking involved before an article can be published. This is a huge drain on productivity, and it is easy to miss minor quality-check details. What is needed is an automated approach that highlights content issues based on a list of predefined checks. Writers cannot submit an article for review until all the checks have passed, which reduces the back-and-forth.

In this article, we discuss a practical implementation of this approach.

High-level steps in creating blog post content

The normal workflow for developing a blog post goes something like this:

- The writer creates an initial outline based on the initial content idea and customer brief

- The customer makes a qualitative review of the text and then prepares comments/modification requests.

- The writer then implements the changes and, once they are approved, the raw text is imported into a CMS like WordPress.

- An SEO/website team member then takes over and ensures proper linking (both internal and external), keyword usage, image alt text, content classification, tags, etc.

- The customer then reviews the final text in WordPress again and, if everything is OK, the text is finally published.

This whole process is tedious and cumbersome, and it can significantly inflate content costs if you are developing content at scale. More importantly, it can result in severely under-optimized or low-quality content when working with inexperienced writers.

So how can we automate the process with a bit of tech?

This can be done using a two-part approach.

- Develop a list of conditions that must be met before an article can be submitted for review

- Use a content scanner that automatically flags any of these conditions that have been violated. If any are flagged, submission for draft review is disabled.
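The two parts above can be sketched in a few lines of Python. This is only an illustration of the idea, not how Syptus implements it: the condition names, the `scan` and `can_submit` functions, and the `example.com` host are all assumptions made for the example, and only the standard library is used.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _Collector(HTMLParser):
    """Collects the tags, links, and images found in an HTML draft."""

    def __init__(self):
        super().__init__()
        self.tags = []
        self.links = []
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        self.tags.append(tag)
        if tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        # An image with a missing or blank alt attribute counts as a violation.
        if tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt += 1


def scan(html, site_host):
    """Part 1 and 2 combined: evaluate each (hypothetical) condition.

    Returns a dict mapping condition name to True (met) or False (violated).
    Links without a host, such as "/pricing", are treated as internal.
    """
    collector = _Collector()
    collector.feed(html)
    hosts = [urlparse(link).netloc for link in collector.links]
    return {
        "has_h1": "h1" in collector.tags,
        "images_have_alt_text": collector.images_missing_alt == 0,
        "has_internal_link": any(h in ("", site_host) for h in hosts),
        "has_external_link": any(h not in ("", site_host) for h in hosts),
    }


def can_submit(results):
    """Submission for review is enabled only if every condition is met."""
    return all(results.values())


draft = '<h1>Title</h1><p><a href="/pricing">Pricing</a></p><img src="a.png">'
results = scan(draft, site_host="example.com")
failed = [name for name, ok in results.items() if not ok]
print("Violated conditions:", failed)
print("Can submit:", can_submit(results))
```

The sample draft has a heading and an internal link but no alt text and no external link, so two conditions are flagged and submission stays disabled.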

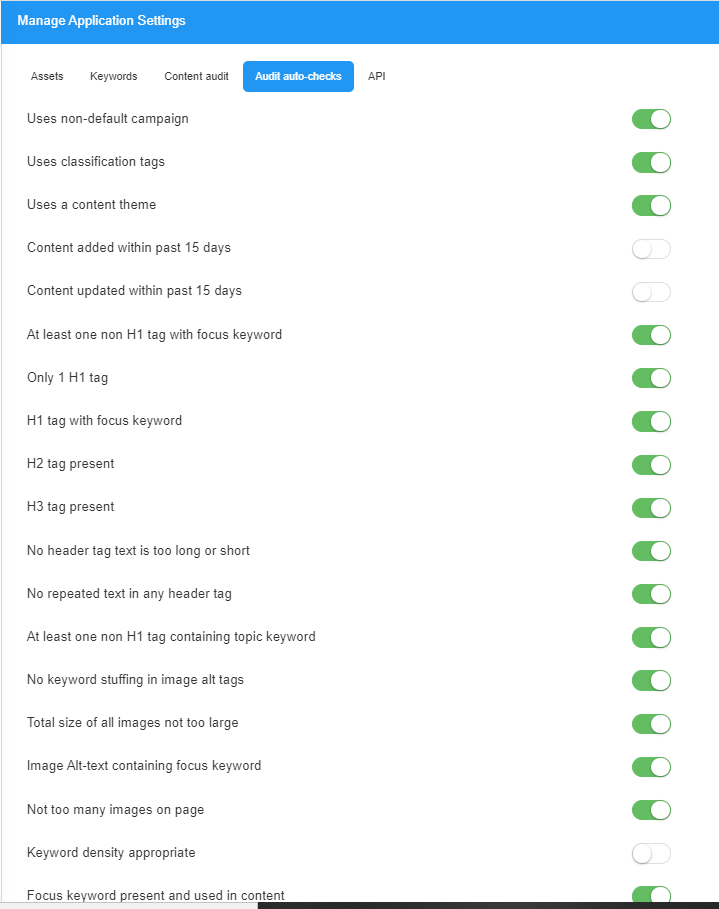

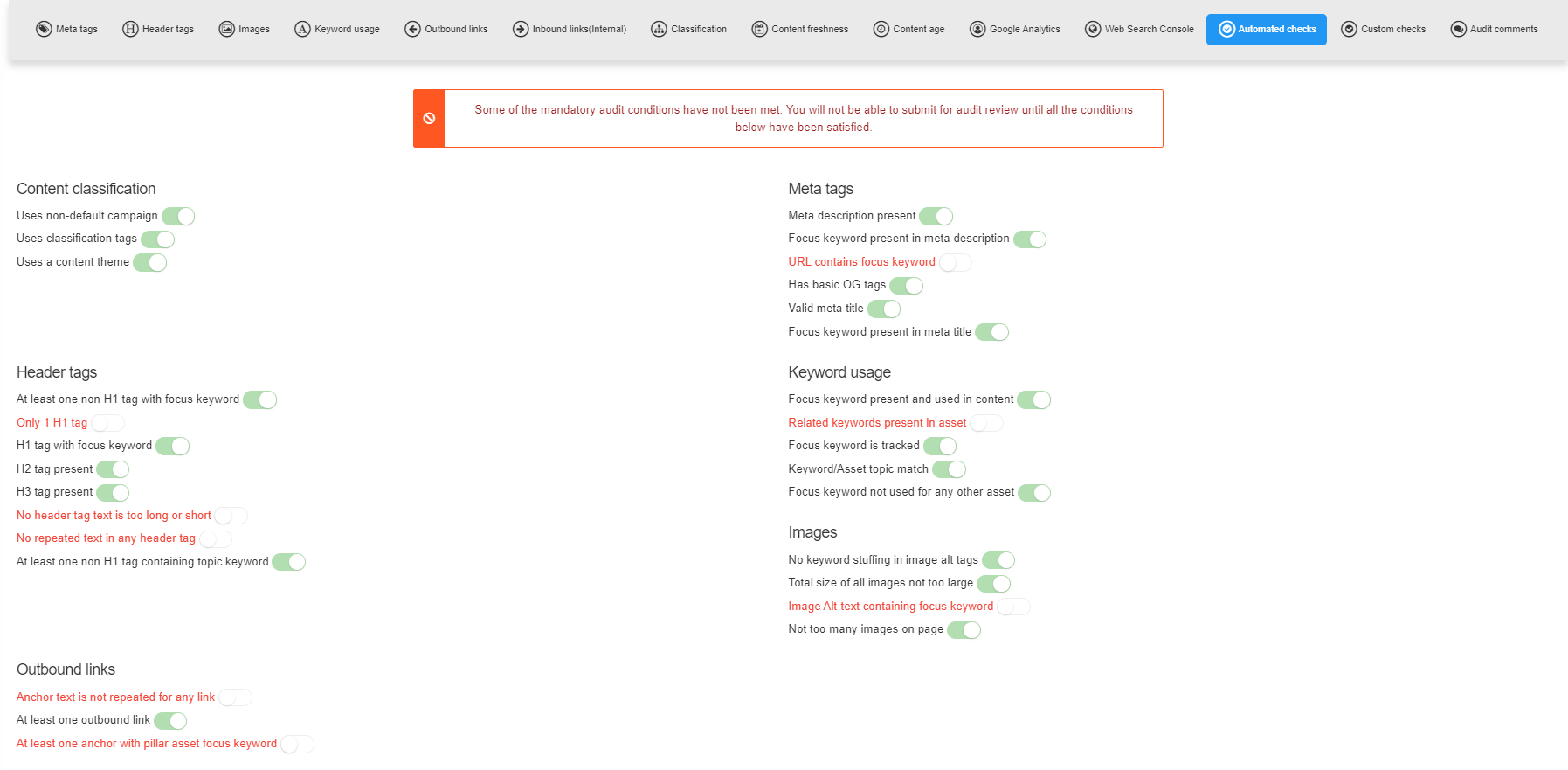

For example, the figure below shows the conditions that you can set for compliance when using the Syptus content audit tool.

Users can enable/disable these based on the level of compliance required. Now when a content item is imported into Syptus, the tool automatically checks these conditions and makes database entries for each condition. When a user visits the content checks section to submit for review, the app shows an error message if any of the mandatory conditions have not been met.

As can be seen, the tool has automatically flagged that some condition has not been met. This prevents the writer from submitting the content for review; they must instead revise the content until all conditions are met.
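The behaviour described in this section (switchable conditions, one database entry per condition, and an error at submission time) could look roughly like the sketch below. The settings dictionary, the `check_results` table, and both function names are hypothetical and chosen for illustration; the actual Syptus schema is not described in this article. An in-memory SQLite database stands in for the real store.

```python
import sqlite3

# Hypothetical settings: which checks are switched on, and which are mandatory.
CHECK_SETTINGS = {
    "has_h1": {"enabled": True, "mandatory": True},
    "images_have_alt_text": {"enabled": True, "mandatory": True},
    "has_external_link": {"enabled": True, "mandatory": False},
    "has_tags": {"enabled": False, "mandatory": False},
}


def record_results(conn, content_id, results):
    """Store one row per enabled condition for an imported content item."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS check_results "
        "(content_id INTEGER, name TEXT, mandatory INTEGER, passed INTEGER)"
    )
    rows = [
        (content_id, name, int(cfg["mandatory"]), int(results.get(name, False)))
        for name, cfg in CHECK_SETTINGS.items()
        if cfg["enabled"]  # disabled checks are not recorded at all
    ]
    conn.executemany("INSERT INTO check_results VALUES (?, ?, ?, ?)", rows)


def submission_errors(conn, content_id):
    """Return the names of mandatory conditions that have not been met."""
    cursor = conn.execute(
        "SELECT name FROM check_results "
        "WHERE content_id = ? AND mandatory = 1 AND passed = 0 ORDER BY name",
        (content_id,),
    )
    return [row[0] for row in cursor]


conn = sqlite3.connect(":memory:")
scan_results = {
    "has_h1": True,
    "images_have_alt_text": False,
    "has_external_link": False,
}
record_results(conn, content_id=1, results=scan_results)

errors = submission_errors(conn, content_id=1)
if errors:
    print("Cannot submit for review. Unmet mandatory checks:", ", ".join(errors))
```

Here the missing external link does not block submission because that check is marked optional, while the missing alt text does.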

Adding custom conditions

The process above works fine for technical checks such as the presence of HTML tags, linking, image alt text, classification testing, etc. But what if you have your own checks that must pass before a submission for review is allowed? Consider the conditions below:

- Content must be based on a specific, pre-agreed outline (there should be a ref. number for the outline involved)

- Content must comply with all legal and branding requirements

- It must have been scheduled for the current production cycle (week, month, etc.) to ensure that out-of-turn or ad hoc items are not pushed into the backlog

These conditions are just illustrative, but the point to note is that the customer can add pretty much any condition that cannot be processed algorithmically at the technical level. The writer/content team member would then be forced to ensure that the conditions are met before the content item lands for review in the customer’s inbox.

The Syptus tool enforces these checks through what we call Audit templates. An audit template can include any arbitrary conditions (each with a binary result) and can be attached to any content item. The writer must then review and mark each condition as complete before the app allows submission for review. If a condition is marked complete (or passed) but turns out not to be met at the time of the audit, the writer is accountable.

This sort of implicit accountability assignment forces writers to be more thorough in their work before submitting content for audit review.
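As a rough illustration of the audit template idea, the sketch below models a template as a list of yes/no conditions and records who signed off on each one. The class names `AuditTemplate` and `ContentAudit`, their fields, and the writer name are assumptions made for this example, not the tool's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class AuditTemplate:
    """A reusable list of custom conditions, each with a binary result."""
    name: str
    conditions: list  # list of condition descriptions (strings)


@dataclass
class ContentAudit:
    """One template attached to one content item, with per-condition sign-offs."""
    content_id: int
    template: AuditTemplate
    signed_off: dict = field(default_factory=dict)  # condition -> writer name

    def mark_complete(self, condition, writer):
        """Record that a writer has confirmed a condition (for accountability)."""
        if condition not in self.template.conditions:
            raise ValueError(f"Unknown condition: {condition}")
        self.signed_off[condition] = writer

    def pending(self):
        """Conditions that nobody has marked complete yet."""
        return [c for c in self.template.conditions if c not in self.signed_off]

    def can_submit(self):
        """Submission for review is allowed only when nothing is pending."""
        return not self.pending()


template = AuditTemplate(
    name="Blog post sign-off",
    conditions=[
        "Based on the pre-agreed outline (reference number recorded)",
        "Complies with all legal and branding requirements",
        "Scheduled for the current production cycle",
    ],
)
audit = ContentAudit(content_id=1, template=template)

audit.mark_complete(template.conditions[0], writer="writer_a")
print("Can submit:", audit.can_submit(), "| pending:", len(audit.pending()))

for condition in audit.pending():
    audit.mark_complete(condition, writer="writer_a")
print("Can submit:", audit.can_submit())
```

Storing the writer's name against each condition is what makes the accountability explicit: if an audit later finds a signed-off condition unmet, the record shows who confirmed it.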

Wrapping it up

High-quality content that is designed to attract and engage actual customers in a systematic manner, and in full compliance with internal processes, can be difficult to produce at scale without sufficient automation. Automation along the lines described above saves subject matter experts and content strategists a lot of time on repeated manual reviews. They can focus instead on research and on creating content that demonstrates advanced domain expertise.